There's a scene in Dune where Paul Atreides has to ride the sandworm for the first time. It's massive, terrifying, and if you don't hook in right, it will swallow you whole. But if you hook in and learn to ride it, you can cross the desert faster than anyone thought possible.

That's AI right now. It's the sandworm.

The dominant narrative is fear. "AI will take all knowledge work jobs." "80% of roles will be automated." Every week, another apocalyptic headline. And I get it; it's scary, it's unknown, and it's moving fast.

But what if the doomers are wrong? Not about AI being powerful; they're right about that. Wrong about what it means. What if AI doesn't take your job, but instead breaks through the ceiling that's been holding entire industries back for decades? What if the real story isn't about loss, but abundance?

I believe it is. Here's why.

The Scaling Wall

Many industries have what I call a "scaling wall": a hard ceiling on output, not because there's no market need, but because you simply cannot train, hire, or scale enough humans to meet the demand. The bottleneck isn't customers. It's capacity.

The numbers are staggering.

Healthcare is projected to be short 187,000 physicians by 2038.¹ Right now, 49% of doctors experience burnout multiple times per week. There are 302,000 fewer licensed practical nurses than we need. And the kicker: 44% of physicians are over 55. The pipeline cannot physically replace them fast enough. Meanwhile, when Northwestern Medicine deployed generative AI on radiology reports, they saw a 40% productivity boost across 24,000 reports in five months.² AI isn't replacing radiologists; it's removing the administrative bottleneck so they can focus on the cases that actually need a human brain.

Cybersecurity has 4.76 million unfilled positions globally.³ Not projected. Right now. The gap is growing 19% year over year. 96% of cybersecurity professionals say AI will enhance their roles, not replace them. The demand was always there. We just couldn't train people fast enough.

Education is short 411,000 teachers; one in eight positions is either unfilled or staffed by someone without the right certification.⁴ UNESCO says the world needs 44 million more teachers by 2030. That's not a number you solve with recruiting. But Stanford found that AI tutoring improved student mastery by 4 percentage points (9 points for struggling students), and Carnegie Mellon measured learning gains of 0.8 standard deviations, nearly equivalent to high-quality human tutoring.⁵ AI tutoring doesn't eliminate teachers. It extends their reach. One teacher plus AI can serve more students than either could alone.

Legal services might be the most telling. 92% of civil legal problems for low-income Americans go completely unserved.⁶ Not poorly served. Unserved. At $300 an hour, anyone middle class or below is priced out. The demand for legal help is enormous. The supply can't scale because it takes 7+ years to train a lawyer and the economics don't work for lower-income clients. AI-powered legal chatbots are already operating in North Carolina, Nevada, and Missouri. Brazil's VICTOR AI evaluates Supreme Court appeals in seconds versus 44 minutes for a human clerk.⁷

Construction is short 350,000 workers every month.⁸ 41% of the workforce is retiring by 2031, and for every five workers leaving, only two are entering. And here's the twist: AI isn't reducing the need for skilled tradespeople. Data center construction for AI infrastructure is increasing demand. Electrician roles are growing 9.5%. HVAC is up 8.1%.

I could keep going. The CPA pipeline is collapsing: 75% of CPAs are at or near retirement, and accounting degree completions are down 30%.⁹ Manufacturing faces 2.1 million unfilled jobs by 2030, costing $1 trillion in lost output.¹⁰

The pattern is the same everywhere: massive unmet demand, and the bottleneck is human capacity, not market need.

When AI lowers the scaling wall, the demand doesn't disappear. It floods in. More people get healthcare. More kids get tutoring. More small businesses get legal help. More homes get built. The humans doing that work aren't replaced; they're doing different work. Guidance instead of grunt work. Oversight instead of data entry. Complex problem-solving instead of repetitive tasks.

Latent demand, once unlocked, creates more jobs. Not fewer.

Ride the Sandworm

OK, so the opportunity is real. But here's the part that makes people uncomfortable: you have to actually ride the thing.

Every major technology wave has separated the riders from the swallowed. Kodak invented the digital camera in 1975 and refused to pursue it because it would cannibalize their film business. They collapsed. Blockbuster had roughly 9,000 stores and a $5 billion market cap in 2004. Late fees were too profitable to give up. Nearly all of its stores had closed by early 2014. BlackBerry went from 50% of the U.S. smartphone market to less than 1%.

The pattern is always the same. It wasn't that these companies couldn't see the technology. They were afraid of disrupting what was working. Slow decisions. Internal resistance. A failure to ride the wave.

The AI adoption cliff is already forming. 78% of organizations used AI in 2024, up from 55% in 2023.¹¹ The share of U.S. work hours using generative AI jumped from 4.1% to 5.7% in under a year. Companies that moved early are pulling away; JPMorgan's COIN platform does the equivalent of 360,000 staff hours of work annually. Insilico Medicine developed the first generative AI-designed drug in 18 months for $2.6 million. The traditional path? Six years and $400 million.¹²

You don't have to love the sandworm. You don't have to fully understand it yet. But you have to hook in. The individuals and organizations that learn to ride AI, however imperfectly and awkwardly, will be the ones still standing when the dust settles.

Tasks, Not Jobs

A while back, a couple of buddies and I worked on a startup that would essentially build a library of real-world data for training embodied AI in construction and manufacturing. Our mantra was "tasks, not jobs." It reframed the whole conversation.

Here's what I mean. There are many tasks that humans do right now that they don't want to do, or shouldn't be doing. Dangerous tasks: demolition, hazardous material handling. Tasks where humans just aren't reliable, like visual inspection of a part the 700th time it crosses your desk on a shift. Retrospective error rates in radiology are around 30%.¹³ That's not because radiologists are bad at their jobs. It's because fatigue is real, and repetition degrades performance. These are tasks AI should do.

But a "job" is different from a "task." A job has responsibility. Our entire world is built around it. Opportunities, risks, liability: wherever responsibility lies, whether between a business and a consumer or between one business and another, a person is always the backstop. Someone, not something, has to own it.

McKinsey's November 2025 report found that AI agents could handle tasks representing 44% of U.S. work hours. But they were careful to say this is "not a forecast of job losses; it's an indication of how profoundly work could change."¹⁴ Stanford found that only 4% of occupations see AI touching more than 75% of their tasks. Most jobs have pockets of AI-automatable work, not wholesale replacement.

When you separate tasks from jobs, AI gets a lot less scary. The quality control inspector isn't eliminated; they shift from "inspect every item" to "supervise the AI inspector and validate the edge cases." The radiologist shifts from "read every image" to "review AI-flagged anomalies and make final diagnoses." The lawyer shifts from "review 12,000 contracts" to "AI-assisted research plus strategic judgment."

AI creates what some researchers call "responsibility gaps": situations where accountability gets murky because no one person made the decision. I plan to dig into that concept more deeply in a future post, but the takeaway for now is simple: AI systems are not legal entities. They cannot bear responsibility. When things go wrong, a human must own it. That's not a limitation; it's a structural reason humans are irreplaceable.

5x More Companies, Not 80% Job Loss

Instead of obsessing over how many jobs AI will eliminate, consider this: what if AI means 5x more companies starting?

The barrier to entrepreneurship is collapsing. API costs have dropped from $60 per million tokens to $2.50 in one year.¹⁵ App development costs are down by as much as 80%. A team of three developers built a complete business banking platform in six months. Pre-AI, that would have taken 15-20 developers 18 months. Top-performing AI-native startups are operating with teams 40% smaller than their non-AI peers, producing the same or better output.¹⁶
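To make those numbers concrete, here is a quick back-of-the-envelope sketch in Python. It only restates the figures quoted above; the helper names are mine, and "person-months" (headcount times calendar months) is a deliberately crude effort measure that I'm assuming for the sake of comparison.

```python
# Figures are the ones quoted in the paragraph above. The helper names are
# hypothetical, and person-months is an assumed, simplified effort measure.

def percent_drop(old: float, new: float) -> float:
    """Percentage decrease going from `old` to `new`."""
    return (old - new) / old * 100


def person_months(developers: int, months: int) -> int:
    """Rough effort estimate: headcount multiplied by calendar months."""
    return developers * months


api_drop = percent_drop(60.00, 2.50)   # USD per million tokens
ai_team = person_months(3, 6)          # three developers, six months
pre_ai_low = person_months(15, 18)     # low end of the pre-AI estimate
pre_ai_high = person_months(20, 18)    # high end of the pre-AI estimate

print(f"API price drop: {api_drop:.1f}%")
print(f"AI-assisted build: {ai_team} person-months")
print(f"Pre-AI estimate: {pre_ai_low} to {pre_ai_high} person-months")
print(f"Effort ratio: {pre_ai_low / ai_team:.0f}x to {pre_ai_high / ai_team:.0f}x")
```

On those inputs, the API price falls by about 96%, and the banking platform took roughly 15 to 20 times less effort than the pre-AI estimate.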

I've been passionate about this for years. I co-founded a boutique product development company called Anthroware and spent 12 years building products with small, obsessed teams. I wrote a whole series about why small teams win; the communication advantages alone are massive. Communication channels scale as n(n-1)/2. A team of 5 has 10 channels. A team of 10 has 45. A team of 20 has 190. It gets harder to nail the nuance of a great user experience as team size increases. Could you imagine a team of 300 cooking risotto?
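If you want to play with the n(n-1)/2 formula yourself, here is a minimal Python sketch. The function name is just something I picked for illustration.

```python
def communication_channels(team_size: int) -> int:
    """Number of distinct person-to-person channels in a team: n(n-1)/2."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    # n * (n - 1) is always even, so integer division is exact.
    return team_size * (team_size - 1) // 2


# Team sizes mentioned above, plus the 300-person risotto crew.
for n in (5, 10, 20, 300):
    print(f"team of {n:>3}: {communication_channels(n):>6} channels")
```

That 300-person kitchen has 44,850 possible one-to-one conversations going on.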

AI supercharges this. Small teams that were already punching above their weight can now build things that used to require entire departments. 67,200 generative AI firms exist as of 2025, up from 50,000 in late 2023. 74% of entrepreneurs say AI is a core enabler of their startup.¹⁷ And this isn't just a Silicon Valley thing; more than 40% of ChatGPT's global traffic comes from middle-income countries like Brazil, India, and Indonesia. 159 AI startups across Africa have raised over $800 million.¹⁸

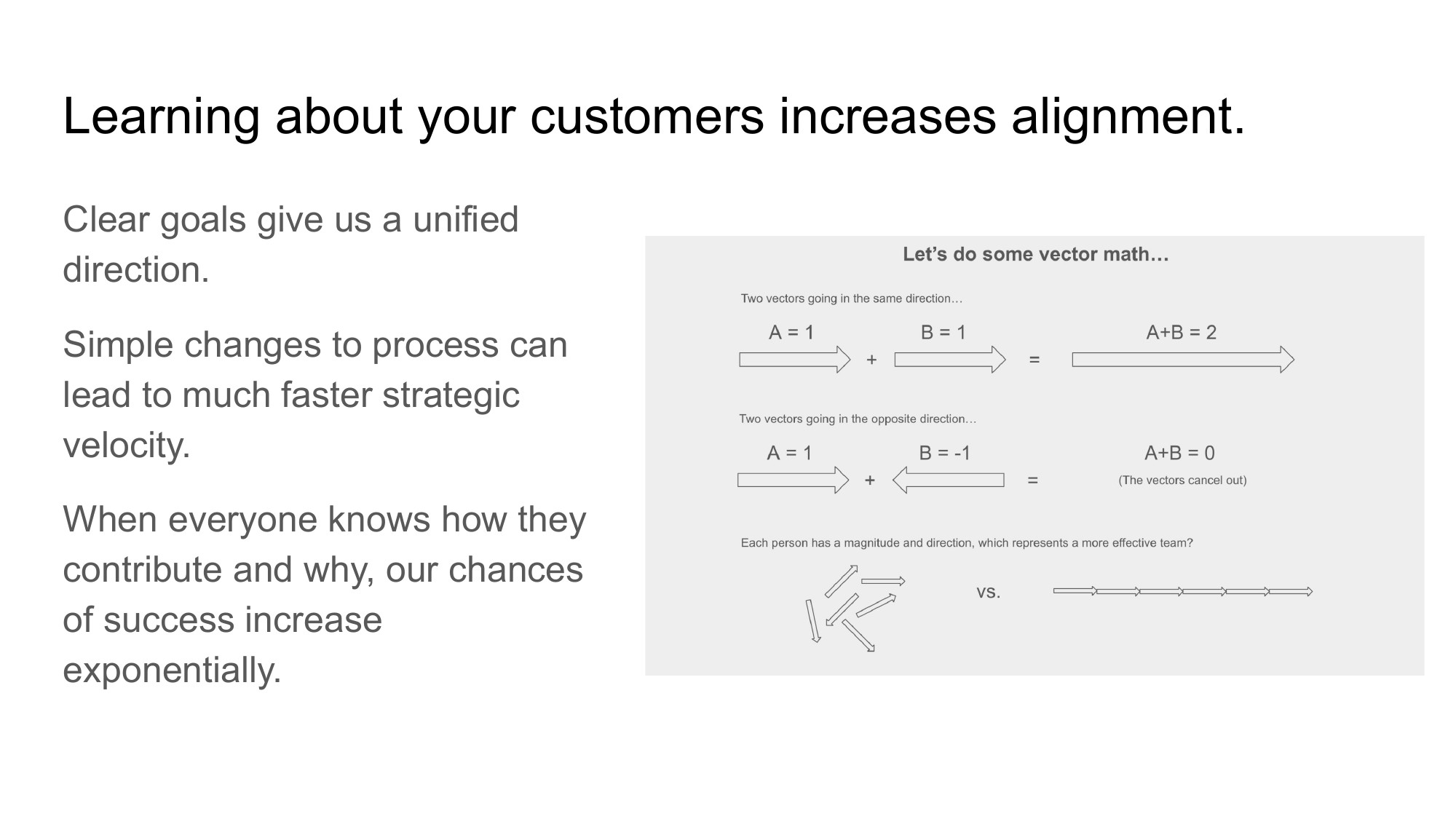

When everyone on the team knows how they contribute and why, the vectors add up. When they don't, they cancel out.

Think about the "vector alignment" framework I use: everyone on the team is represented by a vector. Vectors have a direction (where the team member thinks we're headed) and a magnitude (how much they contribute to that direction). When everyone on a small team knows how they contribute and why, the vectors all point in the same direction. Your chances of success increase exponentially. Add AI to that equation and you have a tiny team with the output of a mid-sized company and the alignment of a founding crew sitting around a kitchen table.
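Here is a toy version of the vector idea in Python. To be clear about the assumptions: the 2D model, the degree-based directions, and the "alignment score" (net progress divided by total effort) are simplifications I'm making up for this sketch, not a formal definition of the framework.

```python
import math

# Each team member is a hypothetical (direction_in_degrees, magnitude) pair.
# This 2D toy model is an assumption made purely for illustration.


def net_progress(members):
    """Length of the vector sum of all members' contributions."""
    x = sum(mag * math.cos(math.radians(deg)) for deg, mag in members)
    y = sum(mag * math.sin(math.radians(deg)) for deg, mag in members)
    return math.hypot(x, y)


def alignment_score(members):
    """Net progress divided by total effort: 1.0 is perfectly aligned, 0.0 fully cancelled."""
    total_effort = sum(mag for _, mag in members)
    return net_progress(members) / total_effort if total_effort else 0.0


aligned_team = [(30, 1.0), (30, 1.0), (30, 1.0), (30, 1.0)]
scattered_team = [(0, 1.0), (90, 1.0), (180, 1.0), (270, 1.0)]

print(f"aligned:   {alignment_score(aligned_team):.2f}")
print(f"scattered: {alignment_score(scattered_team):.2f}")
```

Both teams put in the same total effort. The aligned one keeps essentially all of it, while the scattered one cancels itself out almost completely.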

The question isn't "will AI take 80% of jobs?" The question is "what happens when 5x more people can start companies?" I think the answer is abundance.

The Irreplaceable Human

Here's where I get a little philosophical. Bear with me.

AI is really good at building our thoughts faster. But they're still our thoughts. What happens once all the original, human-written content has been consumed, then reconsumed, then consumed again, a photocopy of a photocopy of a photocopy?

It's called model collapse, and it's not hypothetical. A 2024 study published in Nature showed that when AI models train on AI-generated content, errors compound across generations.¹⁹ The diversity of the original data disappears. The fidelity degrades irreversibly. The Wharton School found that ChatGPT exhibits "plausible pastiche"; it sounds good, but it's derivative. It has fixation bias. Ideas cluster in conventional categories. Users of AI chatbots produce strikingly similar outputs.²⁰

AI recombines. It doesn't pioneer.

Does AI understand God? Natural law? The nuances of the hard and unproven? Pioneers don't pattern-match against existing data. They just go. They build when people tell them not to. They have a vision and they doggedly pursue it. Alan Turing didn't recombine existing math; he formalized computation itself. Grace Hopper didn't iterate on existing tools; she invented the compiler. George de Mestral didn't run a market analysis; he looked at a burdock burr stuck to his pants and saw Velcro.

That's the human spirit. And here's the economics of it: when a needed resource becomes scarce, it usually becomes more valuable. If there are 1,000 or 10,000 AIs for every knowledge worker, or worse, if most people start letting AI think and write for them, then genuine human originality from those who still think for themselves becomes exponentially more valuable. Economist Julian Simon argued that human creativity is the ultimate economic resource. I think he's right.

Even when surrounded by an ocean of AI, we still need humans. In fact, we need them more.

So What Now?

The doomer narrative is loud. And it's wrong: not about AI being transformative, but about what that transformation means.

Industries are hitting scaling walls that only AI can lower. Adoption separates the riders from the swallowed. AI takes the tasks we shouldn't be doing while humans keep the responsibility that defines real work. The barrier to entrepreneurship is collapsing globally. And human originality, the real kind, is becoming more valuable by the day.

The future isn't AI versus humans. It's humans with AI, building more than either could alone.

Hook in. Ride the sandworm. The desert is wide open.

Hope this helps. Reach out if you'd like to chat about it.